For decades, editing a photo well meant mastering the technical language of design software: layers, masks, curves and colour adjustments. Now a new generation of AI tools inside Adobe Photoshop and Adobe Firefly is flipping that process on its head, allowing users to edit images simply by describing the change they want.

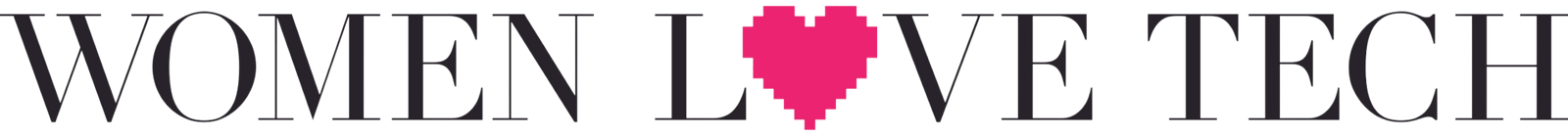

At the heart of this shift is Photoshop’s new AI Assistant, now in public beta for web and mobile. Instead of navigating menus and sliders, you just tell the assistant what you’d like to change. Want to remove strangers from a holiday snapshot, brighten a sunset, or swap a dull background for something more dramatic? Just type or speak your request, and the AI handles the rest.

The assistant can work automatically or guide you step by step, which makes it useful for everyone from students dabbling in digital art to marketers preparing eye-catching visuals on the fly. Editing on your phone while commuting? You can literally say, “soften the background and boost the lighting,” and watch Photoshop interpret your words in seconds.

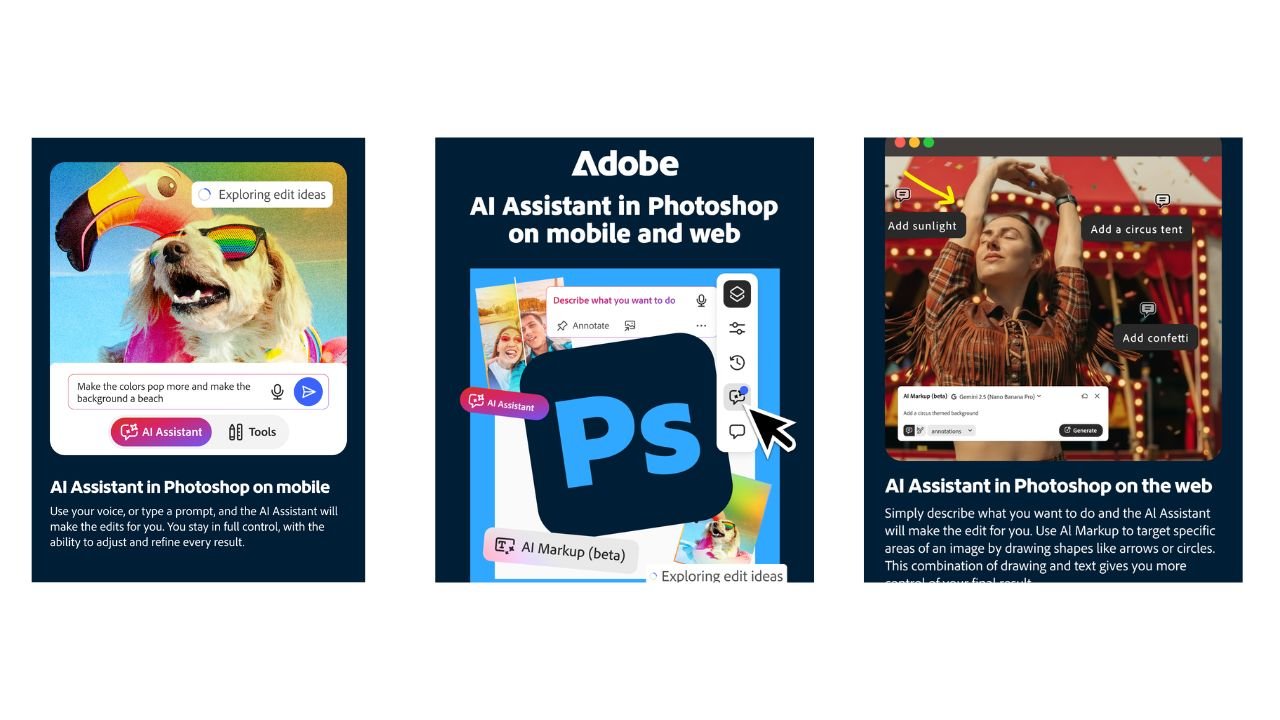

Adobe has also introduced AI Markup, a tool that allows you to draw directly on your image and add prompts to specific areas. Circle an empty patch of garden and type “add wildflowers,” or outline a skyline and request “distant mountains.” Within moments, Photoshop generates context-aware edits that blend seamlessly into your photo. It feels less like traditional editing and more like directing a visual idea.

Meanwhile, Adobe Firefly, the company’s dedicated AI image studio, has received a major refresh. Its all-in-one editor now lets you add or replace objects with Generative Fill, remove distractions with Generative Remove, expand images to new sizes with Generative Expand, increase resolution with Generative Upscale, and remove backgrounds in a single click. Essentially, it gives you professional-grade control without the steep learning curve.

Firefly is also built for experimentation. You can try out over 25 different AI models, including options from OpenAI, Google and Runway, all without leaving the editor. This means you can explore different styles, generate multiple variations, and refine images in one continuous workflow.

Another game-changing feature is unlimited generations. Paid users of Photoshop on web and mobile can now experiment without worrying about limits, and Firefly users already enjoy unlimited generations. Whether you’re creating social media content, album art, or just perfecting a holiday photo, this freedom encourages risk-taking and creativity – a rare thing in traditional image editing software.

What’s exciting is how approachable all of this feels. These tools aren’t just for designers or professional photographers. They’re built for anyone who wants to make their visuals pop, from travellers editing holiday snaps, to students experimenting with digital art, to marketers refining brand imagery. You don’t need years of training – you just need to know how to describe the picture you want.

The wider trend here is clear: AI is no longer just a back-end tool quietly running behind the scenes. It’s becoming a creative partner, embedded directly in the apps people use every day. By lowering technical barriers and making experimentation easy, tools like Photoshop’s AI Assistant and Firefly are redefining what it means to be creative in 2026.

Editing a photo doesn’t have to be intimidating anymore. Now, it can be intuitive, playful, and fast – almost like having a co-creator that understands your vision. From refining portraits to turning a simple vacation snap into a vibrant, polished memory, these AI tools are opening doors to creativity for anyone willing to give words a little power.