If you’ve ever hovered awkwardly outside your teenager’s bedroom door, wondering whether to knock or not to knock, you’ll know this: modern parenting often feels like trying to decode a Wi-Fi password you didn’t set. Our children live large portions of their lives online, particularly on platforms like Instagram, and as much as we’d love to pretend otherwise, that digital world shapes their real one.

This week, the app many of our teens scroll before breakfast and after lights out announced a new feature designed to help parents step in when it truly matters. In the coming weeks, parents in Australia, the US, the UK and Canada who use Instagram’s supervision tools will begin receiving proactive alerts if their teen repeatedly searches for terms clearly associated with suicide or self-harm within a short period of time.

It’s a big move. And one that sits at the intersection of technology, trust and some very tender family conversations.

Not a punishment, but a prompt

Let’s start with what this isn’t. These alerts are not about punishing teenagers, policing every passing thought, or turning parents into digital detectives. Instagram has made it clear that the intention is to support families — not to penalise young people who may be quietly struggling.

If a teen repeatedly searches for phrases promoting suicide or self-harm, or for terms such as “suicide” or “self-harm”, the system will trigger a notification. More ambiguous searches about anxiety or depression on their own won’t set off an alert. In other words, the threshold is deliberately set high: it requires several searches within a short window, and the phrases must be clearly associated with self-harm or suicidal ideation.

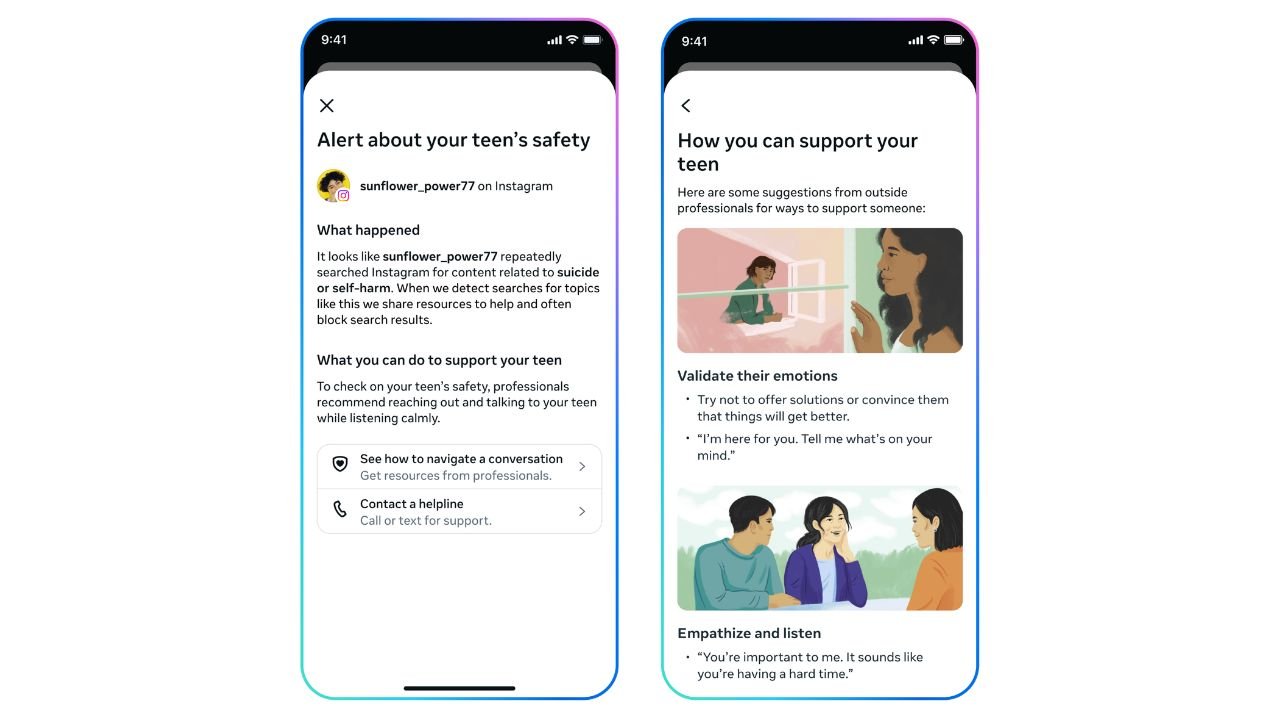

Parents enrolled in supervision will receive an in-app notification, as well as an email, SMS or WhatsApp message (depending on the contact details provided). The alert explains that repeated searches have occurred and includes expert-backed guidance on how to approach a supportive conversation offline.

And that final word — offline — is key.

A nudge towards real-world conversations

Much as teens live on their screens, the support they need most is usually found away from them. The real power of this feature lies not in the notification itself, but in what it might spark at the kitchen table, in the car on the way to footy practice, or during a late-night cup of tea.

Dr Sameer Hinduja, Co-Director of the Cyberbullying Research Center, described empowering a parent to step in at such moments as “extremely important”. Vicki Shotbolt, CEO of Parent Zone, called it a “really important step” towards giving parents greater peace of mind.

Both sentiments reflect a broader truth: when it comes to youth mental health, silence is rarely helpful. Yet many parents fear saying the wrong thing, overreacting, or inadvertently pushing their child further away. By pairing alerts with practical, expert-backed advice, the platform is attempting to lower that barrier.

The hope is that instead of confrontation (“Why on earth are you searching that?”), the conversation becomes one of curiosity and care: “I got a notification and I just want to check in. How are you really doing?”

Striking a delicate balance

Of course, any feature that monitors teen behaviour raises questions about privacy and autonomy. Adolescence is, after all, a time for exploration, identity-building and, occasionally, googling things that make adults uncomfortable.

Instagram says it consulted with experts from its Suicide and Self-Harm Advisory Group and analysed search behaviour to find a balanced threshold. The aim is to avoid unnecessary notifications that could desensitise parents, while still erring on the side of caution.

Will there be moments where a parent receives an alert and discovers their teen was researching a school assignment or reading about a news story? Possibly. But the platform’s position is that a false alarm is preferable to missing an opportunity to support a young person who may genuinely be in distress.

It’s also worth noting that the majority of teens do not search for this type of content. When a teen does, Instagram’s existing policy is to block the search and redirect them to support resources and helplines rather than displaying harmful material.

Building on existing protections

These new alerts don’t exist in isolation. Instagram already hides content about suicide and self-harm from teens, even if it’s shared by someone they follow, and blocks search terms that clearly violate its policies. In those instances, users are directed towards local organisations and support services instead of results.

The company also continues to alert emergency services if it becomes aware of someone at imminent risk of physical harm — interventions that, according to the platform, have saved lives.

What’s particularly interesting is that this is only the beginning. Later this year, the company will expand similar parental notifications to certain AI interactions. As more young people turn to AI chat experiences for advice, comfort or simply someone to “talk” to at 11pm, the company says it is building safeguards to notify parents if a teen repeatedly attempts to engage in conversations related to suicide or self-harm with its AI systems.

In other words, the digital safety net is widening.

Parenting in the push-notification era

For families, this announcement invites reflection as much as reaction. Technology is not a substitute for connection — but it can be a bridge. Used thoughtfully, tools like supervision settings and proactive alerts can complement, rather than replace, trust and communication.

If you’re a parent of a teen, this might be the nudge to revisit your household’s digital agreements. Are you enrolled in supervision? Have you talked openly about how online searches are handled? Does your teen know that your priority is their wellbeing, not their punishment?

And if you’re raising a child in 2026, perhaps the most reassuring takeaway is this: the platforms our teens use every day are beginning to recognise that they, too, have a role to play in supporting young people’s mental health.

Parenting has always involved reading between the lines — a slammed door, a quiet dinner, a sudden change in mood. Now, occasionally, it may also involve reading a notification. What matters most is what happens next: a knock on the door, an open question, and a willingness to listen.

Because behind every search bar is a young person navigating big feelings in a small body. And sometimes, all they need is to know that someone is paying attention — gently, lovingly, and at just the right moment.